Created in conjunction with the Valohai team.

Machine learning is a trend that’s here to stay. A survey of people in North America, Europe, and APAC shows that 28% think the ML trend will significantly impact society as a whole. As for personal impact, only 24% of respondents don’t think machine learning will have any influence on their lives. (Granted, those people probably don’t realize that ML already affects their lives whenever they use Google, order an Uber, or watch yet another show on Netflix.)

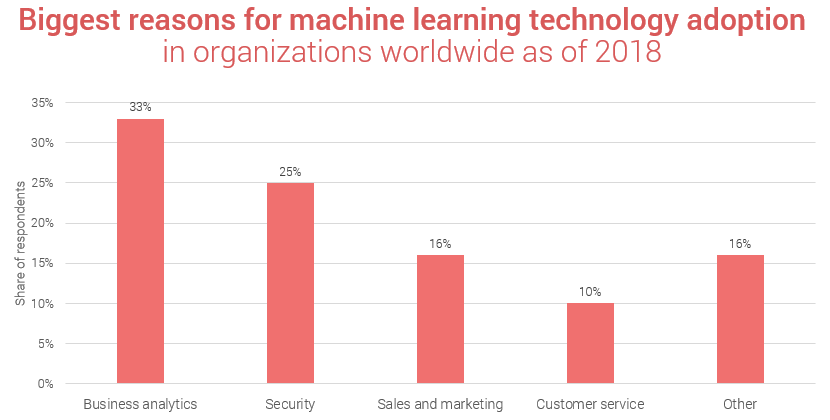

[Source: Statista]

However, while machine learning can be applied to any industry (given the desire and resources), it is not as straightforward as we’d like. Can something be done about it? For sure.

What’s the problem with the machine learning workflow?

The problem with production-level machine learning, as it currently stands, is threefold:

- Setting up and maintaining ML infrastructure requires a lot of manual work

- There aren’t any universal standards

- Machine learning experiments lack version control

Too much manual work

One example of the manual work mentioned above is machine orchestration. Currently, data scientists need to SSH into a server, install the latest Nvidia drivers and Python dependencies, and set up clusters of 100 GPUs hosting Docker containers just to get the training running. These and many other steps in the machine learning pipeline, from feature extraction to training and deployment, result in so-called glue code.

As for the machine learning code itself, it makes up no more than 5% of the project. When you have separate packages for features and functionality such as data analysis and verification, configuration, and infrastructure, a lot of manual work is required to get the data you need from all these sources.

No universal standards

The other challenge is that everyone has their own standards when it comes to machine learning. These might be personal preferences on how to keep records of hyperparameters (if you’re working with ML algorithms yourself), or corporate standards for tooling.

Either way, the issue remains: the use of various technologies by non-integrated teams creates havoc. For example, the IT department is responsible for one part of the pipeline, Data Engineers work on another piece, and Data Scientists try to manage the entire thing, while none of them has a clear view of what the others are doing or with what technology. As a result, Data Scientists need to relearn everything every time they switch projects.

No version control

Finally, there’s the issue of version control, which is a ground rule in software development but not yet in machine learning development. When data scientists run an ML experiment, they usually store only the training code (the input) and the trained model (the output).

When you’re working with your teammates on something the scale of a presentation, you can easily find out who changed what. When an ML algorithm is being trained, however, a change in the environment, for example, can alter the training process in unexpected ways.

Version control should be present in every experiment to be able to reproduce the results or give an explanation of how the model was built.
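
To make this more concrete, here is a minimal Python sketch of what versioning an experiment could look like. It is an illustration only, not how Valohai or any other tool does it: the function names (`fingerprint`, `build_manifest`, `manifest_id`) and the manifest fields are hypothetical, and a real setup would also record things like a Git commit, a Docker image, and library versions.

```python
import hashlib
import json
import platform
import sys


def fingerprint(data: bytes) -> str:
    """Return a SHA-256 hex digest that identifies the exact input bytes."""
    return hashlib.sha256(data).hexdigest()


def build_manifest(code: bytes, data: bytes, hyperparameters: dict) -> dict:
    """Collect the pieces needed to explain or repeat one training run.

    The field names are hypothetical and chosen for this example only.
    """
    return {
        "code_sha256": fingerprint(code),
        "data_sha256": fingerprint(data),
        "hyperparameters": hyperparameters,
        "environment": {
            "python": sys.version.split()[0],
            "platform": platform.platform(),
        },
    }


def manifest_id(manifest: dict) -> str:
    """Derive a short, stable ID: identical manifests give identical IDs."""
    canonical = json.dumps(manifest, sort_keys=True).encode("utf-8")
    return fingerprint(canonical)[:12]


if __name__ == "__main__":
    # Placeholder bytes stand in for a real training script and data set.
    run = build_manifest(
        code=b"print('train')",
        data=b"x,y\n1,2\n",
        hyperparameters={"learning_rate": 0.01, "epochs": 10},
    )
    print("run id:", manifest_id(run))
    print(json.dumps(run, indent=2, sort_keys=True))
```

Stored next to the trained model, a record like this shows which code, data, hyperparameters, and environment produced it. If any of them changes, the run ID changes too, so two results can be compared or reproduced with some confidence.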

About Valohai Deep Learning Management Platform

The APP Solutions team learned about the Valohai product at Web Summit 2018, and we immediately felt that this was something we’d like to learn more about.

Valohai is a deep learning management platform that automates deep learning infrastructure for data science teams.

The features include:

- Zero-setup infrastructure: depending on the nature of your project and your preferences, you can train your models in the cloud or on your own servers with the click of a button.

- Built-in version control: reproduce any previous run with integrated version control for input data, hyperparameters, training algorithms, and environments.

- Numerous integration options: the platform is tool-agnostic. Whether your project uses Excel, TensorFlow, Python, Darknet, GitHub, Docker, or any other tools for training machine learning models, the Valohai DL management platform can be used with any runtime.

- Pipeline automation: Valohai relies on API-first development, so you can easily integrate your machine learning pipeline into your existing development pipeline.

- Standards, finally: the workflow is standardized in Valohai so that you benefit from the industry best practices that help such giants as Facebook, Netflix, and Uber work.

- Monitoring and visualizations: get visual feedback on training performance and monitor all data in real time.

- Easy scalability: run models on hundreds of CPUs and GPUs with the push of a button.

- Deployment: find the best models and deploy them to production straight from Valohai.

- Teamwork: have a transparent view of what experiments others are doing.

Valohai Featured Cases

One of the prominent cases of Valohai deep learning management platform implementation was real-time detection of sexual abuse materials from Darknet. The training material is seized and sensitive, so even the machine learning developers can’t see it themselves, and the training environment runs as an air-gapped, on-premises installation.

Due to the size of a typical data set for this particular project (around 30Gb on average, or up to twice as much for the largest one), the standard ways of training the models were too cumbersome. With the introduction of Valohai’s platform, the machine learning models could be continuously retrained, and resources were used efficiently, only as they were needed. You can read more about this case here.

Another case was related to logistics – creating and speeding up the model training for a self-driving ferry prototype. The team of two, with the help of Valohai DLP, shortened the training time from 2 weeks to 3 days. Read more about this case here.

The APP Solutions Machine Learning Expertise

The APP Solutions provides not just standard web & mobile development, but also data analytics and machine learning projects.

Currently, we have experience in healthcare, communication, and user behavior projects that implement ML features. We also have projects in Ad Tech and Financial Services that require machine learning to detect and fight fraudulent activities, which is why we think the partnership between the APP Solutions and Valohai is a win-win for everyone involved.

“It was the answer to so many of our questions, especially the version control. After all, when you, for example, train a machine learning model to distinguish between diseases or DNA sequences, it’s important to monitor everything it’s learning from and make sure the models are easily scalable because the stakes in healthcare are incredibly high,” says Mykola Slobodian, CEO of the app development company APP Solutions.
